TL;DR

AI lip sync lets you swap translated audio into an existing talking-head video without reshooting the creator. For ad teams, that means faster localization and more weekly creative tests. The catch is input quality: clean audio, a clear mouth view, and a review pass for timing drift matter more than over-tuning every model setting. EzUGC keeps that workflow tied to UGC-style ad production and roughly $5/video economics.

AI lip sync looks like a small feature until you realize what it does to your workflow.

If you can translate a script and generate new audio, you can ship a localized video without reshooting talent, booking studios, or begging creators for revisions.

If you run paid social, that means more creative tests per week.

The best ad teams don't win because they're artistic. They win because they take more shots on goal.

How AI lip sync works in video with an audio track

An AI lip-sync app synchronizes the mouth movements in a video with an audio track.

Not just lips either. The stronger systems also track lower-face motion (jaw), head movement, and timing: the stuff that makes a talking shot feel human instead of robotic.

At a high level, the model:

- Listens to the audio (or generated voice) over time.

- Generates or adjusts frames so the mouth motion matches the audio.

The goal is the right mouth shape for each sound, at the right moment.

Why marketers care more than filmmakers about lip sync app technology.

Filmmakers obsess over "perfect." Marketers obsess over shipping fast enough to test.

If you can turn one winning ad into five localized variants this afternoon, you're ahead.

Do it consistently and you get a repeatable production loop, not a one-off translation project.

How AI lip sync helps video localization and marketing

Video localization used to mean one of two painful options:

- Re-shoot the entire thing with new talent.

- Dub translated audio over the original footage and accept mouth movements that don't match.

AI lip sync adds a third option: translate the message while keeping the on-camera performance believable.

Where AI lip sync actually helps ad teams get the best results.

- Video localization and translation: keep the "same person" on screen in every language.

- Segment-level messaging: "Hey Austin" vs. "Hey Miami," without filming 50 versions.

- Voiceover swaps for product demos and presentations.

The alternative is hiring a creator for every variant.

EzUGC AI UGC is ~$5/video, and the output is consistent (no flaky deliverables, no lighting roulette).
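On the script side, the "Hey Austin" vs. "Hey Miami" swap is mostly a templating problem. Here is a minimal sketch, assuming a made-up script line and a `build_variants` helper that are purely illustrative and not part of any product:

```python
from string import Template

# Hypothetical script template; $city is the only part that changes per segment.
SCRIPT = Template("Hey $city, here's the routine I use every morning.")

def build_variants(cities):
    """Return one script per city, keyed by city name."""
    return {city: SCRIPT.substitute(city=city) for city in cities}

variants = build_variants(["Austin", "Miami"])
for city, script in variants.items():
    print(f"{city}: {script}")
```

Each generated script would then go through voice generation and lip sync like any other variant.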

A practical note on deepfake risk management.

Lip-sync can be used responsibly (localization, accessibility, education).

It can also be used irresponsibly (impersonation). Have a policy, get consent, and don't be cute with trust.

If you're operating at scale, you should also understand detection and risk. Stanford HAI has a good primer on deepfake detection.

How AI lip-sync apps work from script to video out

The workflow looks simple on the outside: script in, video out.

Under the hood, it's a stack of models doing different jobs.

Machine learning is doing pattern matching at scale.

AI lip-sync models learn the relationship between:

- What a sound is (a phoneme)

- What a mouth should do (shape + timing)

They're trained on large datasets of aligned video and audio.
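To make the phoneme-to-mouth-shape idea concrete, here is a toy sketch. Real models learn this relationship from data; the lookup table, the shape names, and the timings below are all assumptions made up for illustration.

```python
# Illustrative phoneme-to-mouth-shape table. Real systems learn this mapping
# from aligned video and audio; these names are assumptions for the sketch.
PHONEME_TO_SHAPE = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "teeth_on_lip", "V": "teeth_on_lip",
    "AA": "open_wide", "IY": "spread", "UW": "rounded",
}

def to_shape_track(phonemes):
    """Convert (phoneme, start_s, end_s) tuples into mouth-shape keyframes.

    Consecutive phonemes that share a shape are merged into one keyframe.
    Unknown phonemes fall back to a neutral mouth shape.
    """
    track = []
    for phoneme, start, end in phonemes:
        shape = PHONEME_TO_SHAPE.get(phoneme, "neutral")
        if track and track[-1]["shape"] == shape:
            track[-1]["end"] = end  # extend the previous keyframe
        else:
            track.append({"shape": shape, "start": start, "end": end})
    return track

# Made-up phoneme sequence with made-up timings (in seconds).
track = to_shape_track([
    ("B", 0.00, 0.06), ("IY", 0.06, 0.20), ("M", 0.20, 0.28), ("P", 0.28, 0.34),
])
for keyframe in track:
    print(keyframe)
```

The point is the pairing of shape and timing: each sound needs both a mouth shape and a window in which to show it.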

That's why you see quality jumps over time: the models get better at edge cases like accents, fast speech, and partial occlusion.

What is happening under the hood?

Deep neural networks predict facial motion from the audio. The hard part is making that motion look and feel natural: the mouth has to move smoothly between shapes instead of snapping from one to the next. The models keep getting better at this.
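One way to picture why the mouth shouldn't snap: treat mouth openness as a number per frame and smooth it. This is a simple exponential moving average used as a stand-in for illustration, not how any particular lip-sync model works (those learn smooth motion during training). The `alpha` value is an arbitrary assumption.

```python
def smooth(values, alpha=0.5):
    """Exponential moving average over per-frame mouth openness (0..1).

    Lower alpha means heavier smoothing; alpha=1 returns the input unchanged.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    for value in values:
        previous = smoothed[-1] if smoothed else value
        smoothed.append(alpha * value + (1 - alpha) * previous)
    return smoothed

# A mouth that "snaps" open and shut between frames.
raw = [0.0, 0.0, 1.0, 1.0, 0.0]
print(smooth(raw))  # [0.0, 0.0, 0.5, 0.75, 0.375]
```

The smoothed track eases into the open position and back out, which is closer to how a real jaw moves.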

A practical AI lip sync workflow in plain English.

No magic. Mostly inputs.

1) Prepare your script or voice for a lip-sync app.

Start from one of two inputs:

- Text script (then generate voice)

- Recorded audio (your voice, a VO artist, or a translated track)

For localization, you'll typically:

- Translate the script to the target language.

- Generate/record audio in the target language.

- Lip-sync the face to match the new audio.
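The localization steps above run in a fixed order per language, which is easy to track in code. A rough sketch follows; the `LocalizationJob` class, the step names, and the file name are hypothetical and do not correspond to any tool's API.

```python
from dataclasses import dataclass, field

# The three localization steps, in the order they have to happen.
STEPS = ("translate_script", "generate_audio", "lip_sync")

@dataclass
class LocalizationJob:
    source_video: str
    language: str
    done: list = field(default_factory=list)

    def next_step(self):
        """Return the next pending step, or None when the job is finished."""
        for step in STEPS:
            if step not in self.done:
                return step
        return None

    def complete(self, step):
        """Mark a step done, enforcing the translate -> audio -> sync order."""
        expected = self.next_step()
        if step != expected:
            raise ValueError(f"expected {expected!r}, got {step!r}")
        self.done.append(step)

# One job per target language for the same winning ad (hypothetical file name).
jobs = [LocalizationJob("winner_ad.mp4", lang) for lang in ("es", "de", "pt")]
jobs[0].complete("translate_script")
for job in jobs:
    print(job.language, "->", job.next_step())
```

Tracking state per language makes it obvious which variants are stuck and at which step.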

2) Start a new video project.

Keep the first test boring.

One face, one background, one message. You can get fancy after you've proven the pipeline works.

3) Choose your on-screen talent (avatar or real footage) and add audio to it.

Quality in = quality out.

If you're using avatars, pick one that fits your category (beauty, supplements, apps). If you're using real footage, choose a clip with a clear mouth view.

4) Enable lip sync and set strength/sensitivity.

Most tools let you toggle lip sync on, and some also let you adjust strength or sensitivity.

Start with defaults. Over-tuning too early is how you lose an afternoon.

5) Customize, review, and export.

Do a brutal review pass:

- Do the lips close correctly on P/B/M sounds?

- Do teeth/tongue artifacts show up on certain words?

- Does the timing drift as the clip goes on?

If it passes, export in the formats you need for ads.
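Timing drift is the part of the review pass that is easiest to put a number on. Here is a small sketch, assuming you have noted a few moments by hand where a sound occurs in the audio and where the matching mouth movement appears in the video. The `timing_report` helper and the 0.08-second threshold are placeholders, not a standard.

```python
def timing_report(events, max_offset_s=0.08):
    """Summarize audio/video sync from (audio_time_s, mouth_time_s) pairs.

    Offset is mouth time minus audio time: positive means the mouth is late.
    Drift is how much that offset changes from the first event to the last.
    """
    if not events:
        raise ValueError("need at least one event")
    offsets = [mouth - audio for audio, mouth in events]
    worst = max(offsets, key=abs)
    return {
        "worst_offset_s": round(worst, 3),
        "drift_s": round(offsets[-1] - offsets[0], 3),
        "passes": abs(worst) <= max_offset_s,
    }

# Hand-marked moments near the start, middle, and end of a clip (made up).
events = [(1.00, 1.01), (5.00, 5.04), (9.00, 9.12)]
report = timing_report(events)
print(report)
```

A growing offset toward the end of the clip points at drift rather than a constant delay, which usually means re-checking the audio track before touching any settings.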

Tips to improve AI lip-sync quality

Most "bad lip sync" is not a model problem.

It's an input problem.

Get clean audio (this matters more than people admit).

- Use a decent mic.

- Reduce background noise.

- Avoid heavy compression artifacts.

Speak clearly and concisely. If the model can't hear phonemes, it can't animate them.
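If you want a quick number for "clean audio," one crude proxy is the gap between the loudest and quietest stretches of a recording. The sketch below assumes samples are floats in [-1.0, 1.0] and uses a synthetic signal; the `rough_snr_db` helper and the window size are illustrative choices, and this is not a substitute for listening to the track.

```python
import math

def window_levels_db(samples, window=1600):
    """RMS level in dBFS for each non-overlapping window of samples."""
    levels = []
    for i in range(0, len(samples) - window + 1, window):
        chunk = samples[i:i + window]
        rms = math.sqrt(sum(s * s for s in chunk) / window)
        levels.append(20 * math.log10(max(rms, 1e-9)))  # avoid log(0)
    return levels

def rough_snr_db(samples, window=1600):
    """Loudest window minus quietest window, as a crude signal-to-noise proxy."""
    levels = window_levels_db(samples, window)
    if len(levels) < 2:
        raise ValueError("need at least two windows")
    return max(levels) - min(levels)

def tone(amplitude, start, count, freq=200, rate=16000):
    """Synthetic sine samples, standing in for real audio in this sketch."""
    return [amplitude * math.sin(2 * math.pi * freq * n / rate)
            for n in range(start, start + count)]

# A quiet "background" stretch followed by a loud "speech" stretch.
clip = tone(0.005, 0, 1600) + tone(0.5, 1600, 1600)
print(round(rough_snr_db(clip), 1))
```

A small gap suggests the background is nearly as loud as the voice, which is the situation where the model struggles to hear phonemes.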

Use footage that makes the mouth easy to read.

- Good lighting (no harsh shadows on the mouth)

- High resolution (at least enough to see teeth/lip edges)

- Avoid extreme angles (profile shots are harder).

- Don't cover the mouth. Partial occlusion is one of the harder cases.

Fine-tune only after you've confirmed the basics.

If your output is off, check these in order:

- Audio clarity (is every word easy to hear?)

- Face size and visibility (is the mouth clearly visible?)

- Timing (does the mouth start early or late?)

- Lip-sync strength settings (only once the inputs look good)

Don't debug the model when your input is junk.

Real uses of AI lip sync in video production.

This is already normal across categories.

The difference is whether you're using it for one-off "cool videos" or for a repeatable growth loop.

Where it shows up in the real world.

The future of AI lip sync and video localization

This is going to keep compounding. Teams need more variations without adding headcount, and inputs will keep mattering more than settings.

Where it's heading:

- More realistic motion (less uncanny, fewer artifacts)

- More multilingual coverage (more languages, better accents)

- More automation (script-to-variant pipelines)

For ad teams, the implication is simple.

The bottleneck moves from "can we produce?" to "do we have the taste to pick winners?"

When your goal is general video production, you'll find platforms that go wide: big avatar libraries, broad language coverage, and lots of use cases.

If your goal is UGC-style ad creative and rapid paid-social testing, go narrow and fast.

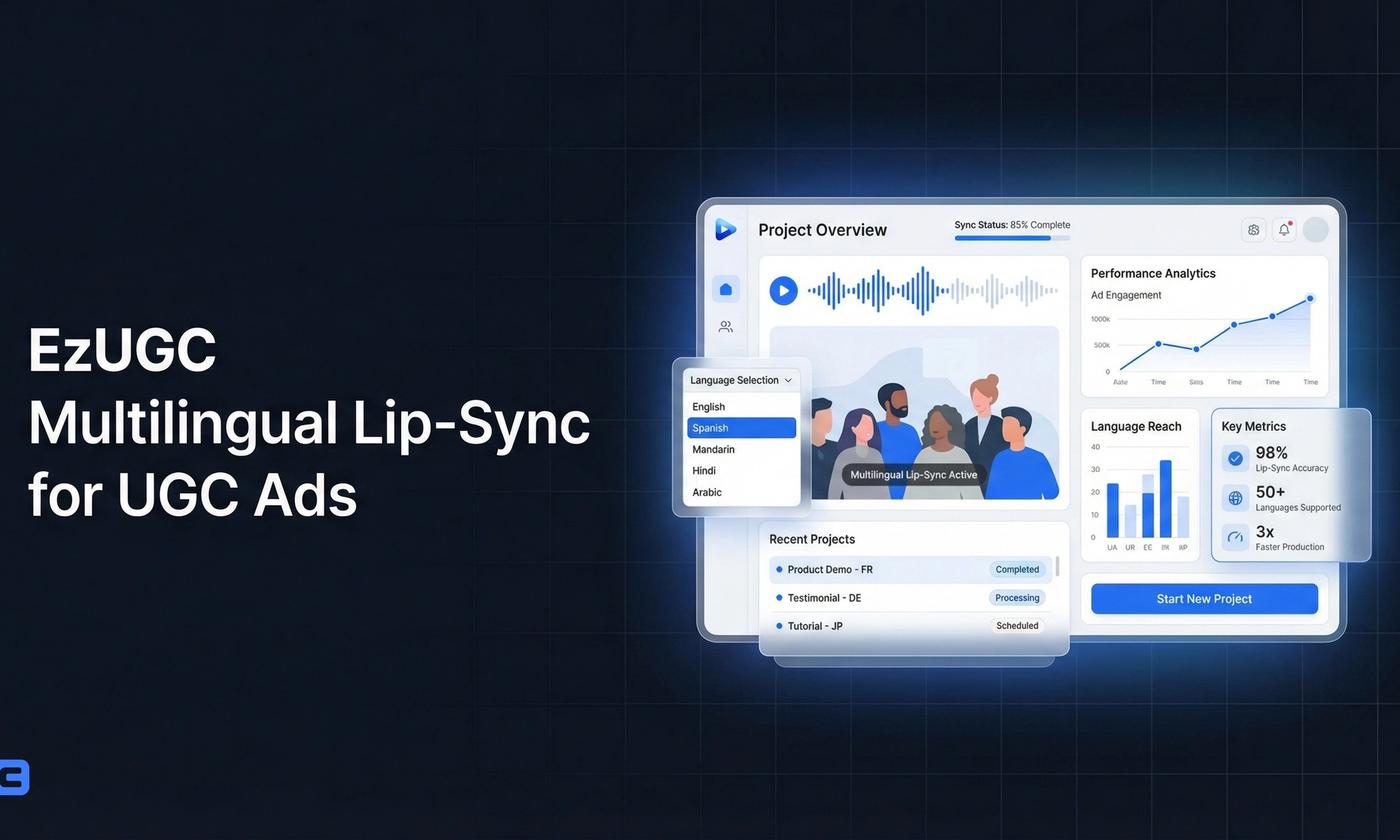

EzUGC is built for performance marketers and DTC teams who care about the following:

- Shipping ads in minutes, not days or weeks

- Consistency across variants and languages

- Realistic AI avatars

- Cost that makes testing rational: ~$5/video vs. the ~$200/video typical creator route.
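The cost point is simple arithmetic, but it's worth seeing at batch scale. A quick sketch using the ~$5 and ~$200 per-video figures quoted above; the batch size and the $1,000 budget are made-up examples.

```python
def batch_cost(variants, cost_per_video):
    """Total spend for a batch of video variants."""
    return variants * cost_per_video

def variants_for_budget(budget, cost_per_video):
    """How many whole variants a fixed budget covers."""
    return int(budget // cost_per_video)

AI_COST = 5         # ~$5/video, per the figure quoted above
CREATOR_COST = 200  # ~$200/video, per the figure quoted above

variants = 5 * 4    # hypothetical batch: 5 languages x 4 hooks
print(batch_cost(variants, AI_COST), batch_cost(variants, CREATOR_COST))
# 100 4000
print(variants_for_budget(1000, AI_COST), variants_for_budget(1000, CREATOR_COST))
# 200 5
```

At these rates, the same $1,000 covers 200 test variants instead of 5, which is the whole argument for testing more.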

Create your first variants here: EzUGC

Sources and citations

- Using AI to detect seemingly perfect deep-fake videos · Stanford HAI

Background on deepfake realism and detection - useful for understanding ethics and risk.

- AI in production research (ACM Digital Library) · ACM

Academic context on AI-assisted media workflows.

- Adoption of AI video generation tools (Applied Sciences) · MDPI

General research on AI video tools and their adoption.

Frequently asked questions
