TL;DR

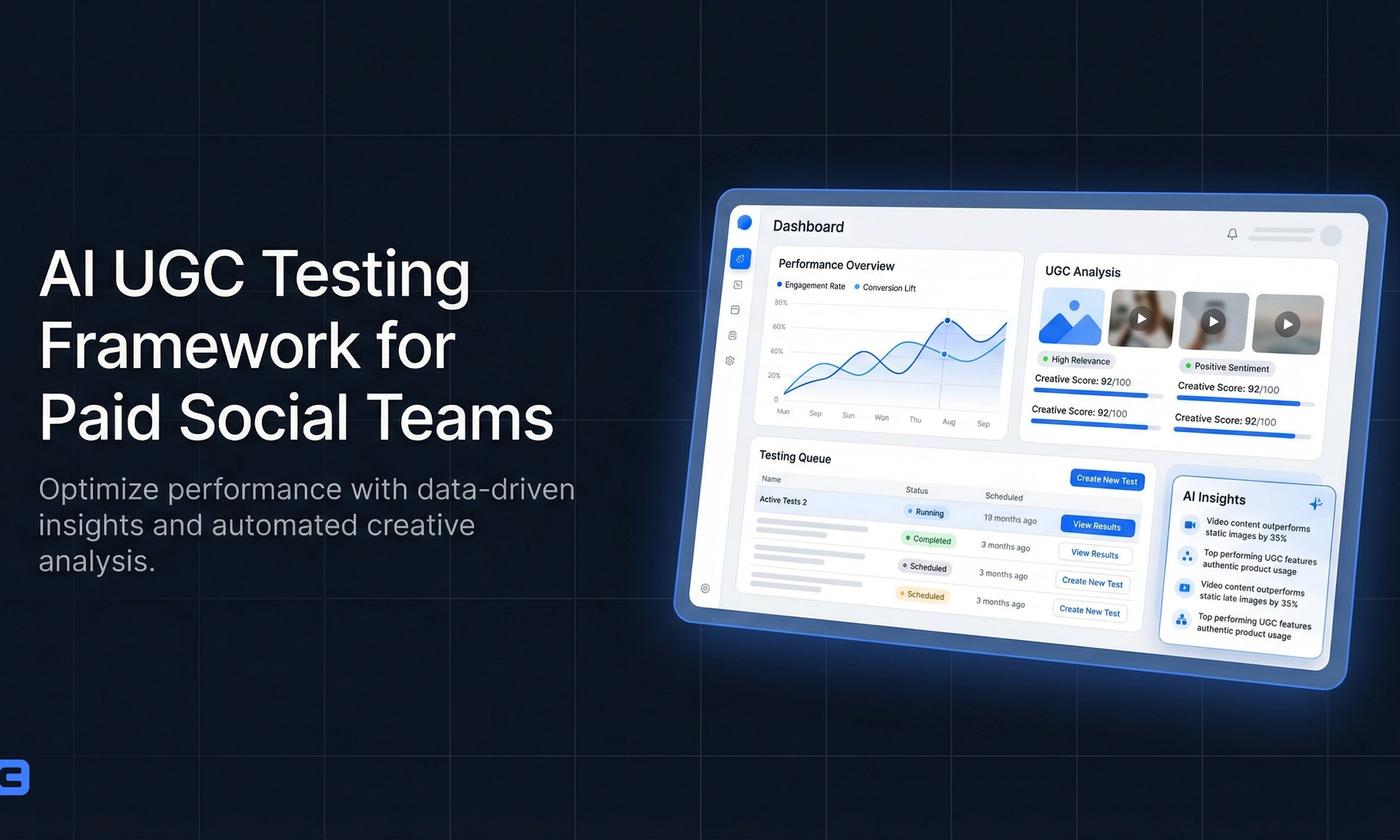

A simple, repeatable AI UGC testing framework that helps Meta and TikTok teams ship more creative in less time, keep tests apples-to-apples, and find...

Why an AI UGC testing framework beats “make 10 ads and pray”

Paid social punishes ambiguity.

If you change the hook, the offer, the creator, the edit style, and the landing page all at once, you don’t learn anything, except how to spend money faster.

An AI UGC testing framework is just a way to isolate variables so you can answer one question per test, in days (not weeks), with a budget you can defend.

If you’re already producing AI UGC, start here: https://www.ezugc.ai/ai-ugc.

The core idea: one variable per test, one decision per week

Your goal isn’t “more creatives.”

Your goal is faster decisions.

A good weekly rhythm:

- Monday: pick one hypothesis (usually hook or angle)

- Tuesday: produce variations (scripts + edits)

- Wed–Fri: run controlled tests

- Friday: declare winners, roll into scaling or iteration

This keeps production and media aligned, and it prevents the common failure mode: shipping a pile of ads with no clear next step.

The 3-layer testing stack (Hook → Angle → Execution)

Think of testing as a funnel.

Start wide at the top (hooks), then narrow into what actually deserves polish.

Layer 1: Hook testing (fastest, cheapest learning)

Hooks are your biggest lever on Meta and TikTok because they decide whether anyone watches the next second.

Test hooks before you obsess over perfect B-roll.

What to hold constant:

- Same product promise

- Same offer (or no offer)

- Same video structure (talking head → proof → CTA)

- Same length bucket (e.g., 20–25s)

What to vary:

- First 1–2 seconds only

Practical example (TikTok):

- Hook A: “I didn’t expect this to work for dry skin…”

- Hook B: “If your moisturizer pills, do this instead.”

- Hook C: “3 reasons your skin barrier isn’t improving.”

If you want speed here, generate hook sets in minutes with https://www.ezugc.ai/tools/ai-hook-generator.

Layer 2: Angle testing (what story sells the product)

Once you have 1–2 hooks that reliably earn attention, you test why people should care.

Angles for DTC usually fall into:

- Problem/solution

- “New mechanism” (a different way to explain the product)

- Social proof / review-led

- Comparison / “instead of” behavior

- Routine / day-in-the-life

Practical example (Meta Reels for a supplement):

- Angle 1: “Afternoon crash” fix (energy stability)

- Angle 2: “Gut-first” framing (digestion + mood)

- Angle 3: “Travel routine” (consistency + convenience)

Hold constant: hook style, creator type, length, CTA.

Vary: the middle 10–15 seconds (the argument).

Layer 3: Execution testing (editing, creator, format)

Execution matters, but it’s expensive to test early because it multiplies production time.

Only test execution after you’ve found an angle that’s already working.

Execution variables worth isolating:

- Creator type (expert vs peer)

- Format (talking head vs voiceover + captions)

- Proof style (demo, UGC review, screenshots, before/after where compliant)

- Editing pace (fast cuts vs calm)

If you’re building AI-generated UGC videos quickly, see https://www.ezugc.ai/features/ai-ugc-video.

The “Comparable Test” checklist (so your results mean something)

Most teams don’t have a testing problem.

They have a comparability problem.

Use this checklist before you launch:

Keep these constant

- Objective: same optimization event (e.g., Purchase)

- Placement bucket: don’t mix wildly different inventories in the same read (e.g., TikTok Spark vs non-Spark)

- Audience: all broad or all the same lookalike; don’t mix audience types within one test

- Offer: same discount, same bundle, same free shipping logic

- Landing page: same URL and on-page message

- Video length range: don’t compare 8s to 45s unless that’s the explicit variable

Change only one thing

If you can’t describe the test in one sentence, it’s not a test.

Example:

- Good: “We’re testing 6 hooks with the same angle and edit.”

- Bad: “We’re testing new hooks, new creators, and a new bundle.”
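The one-sentence rule can also be checked mechanically before launch. Here is a minimal sketch, assuming each creative is described by a small dictionary of attributes. The field names (`hook`, `angle`, `creator`, `offer`) are hypothetical, so swap in whatever your team actually tracks.

```python
from typing import Dict, List, Set

# Hypothetical creative spec: attribute name -> value.
Creative = Dict[str, str]


def varying_fields(creatives: List[Creative]) -> Set[str]:
    """Return the fields whose values differ across the creatives."""
    if not creatives:
        return set()
    keys = set().union(*(c.keys() for c in creatives))
    return {k for k in keys if len({c.get(k) for c in creatives}) > 1}


def is_single_variable_test(creatives: List[Creative], ignore=("name",)) -> bool:
    """True when exactly one field (besides the ignored ones) varies."""
    return len(varying_fields(creatives) - set(ignore)) == 1


# Illustrative data only, mirroring the good and bad examples above.
good = [
    {"name": "HOOK_01", "hook": "A", "angle": "problem_solution", "creator": "peer", "offer": "none"},
    {"name": "HOOK_02", "hook": "B", "angle": "problem_solution", "creator": "peer", "offer": "none"},
]
bad = [
    {"name": "AD_01", "hook": "A", "angle": "problem_solution", "creator": "peer", "offer": "none"},
    {"name": "AD_02", "hook": "B", "angle": "problem_solution", "creator": "expert", "offer": "bundle"},
]

print(is_single_variable_test(good))
print(is_single_variable_test(bad))
print(sorted(varying_fields(bad) - {"name"}))
```

If the check fails, the list of varying fields tells you what to lock before you spend anything.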

A weekly plan you can run with a small team

You don’t need a studio.

You need a cadence.

Week structure (example)

Step 1 - Build a hook bank (30 minutes):

- Pull 10–20 hook patterns from comments, reviews, and competitor ads.

- Write 6–12 hook lines for one product.

Step 2 - Produce a tight batch (2–4 hours):

- 6–12 videos, same structure.

- Only the first 2 seconds differ.

Step 3 - Launch a controlled test (same day):

- Meta: put them in the same ad set so delivery conditions match.

- TikTok: group them in the same campaign structure so you’re not comparing different learning states.

Step 4 - Decide quickly (end of week):

- Kill obvious losers.

- Promote 1–2 winners into angle tests next week.

The win is speed: you stop spending a full week debating creative that should’ve been cut on day two.

What to measure (without pretending you have perfect attribution)

Paid social metrics are noisy.

So pick a small set that matches the layer you’re testing.

For hook tests

- Thumbstop / 2-second view rate (platform-specific)

- Hold rate (does the first 5 seconds keep people?)

A hook can “win” even if CPA isn’t best yet, because you haven’t tested the selling argument.
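To make the hook read concrete, here is a rough sketch of how the two rates could be computed from exported counts. The definitions are assumptions: thumbstop is taken as 2-second views over impressions, and hold rate as 5-second views over 2-second views. Platforms define and name these differently, so check your own reporting columns. The numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HookStats:
    label: str
    impressions: int
    views_2s: int  # assumed: viewers who watched at least 2 seconds
    views_5s: int  # assumed: viewers who watched at least 5 seconds


def thumbstop_rate(s: HookStats) -> float:
    """Share of impressions that became a 2-second view."""
    return s.views_2s / s.impressions if s.impressions else 0.0


def hold_rate(s: HookStats) -> float:
    """Share of 2-second viewers still watching at 5 seconds."""
    return s.views_5s / s.views_2s if s.views_2s else 0.0


def rank_hooks(stats: List[HookStats]) -> List[HookStats]:
    """Sort hooks by thumbstop rate, best first."""
    return sorted(stats, key=thumbstop_rate, reverse=True)


# Illustrative numbers only, not benchmarks.
stats = [
    HookStats("HOOK_A", impressions=10_000, views_2s=3_000, views_5s=1_500),
    HookStats("HOOK_B", impressions=10_000, views_2s=2_000, views_5s=1_400),
    HookStats("HOOK_C", impressions=10_000, views_2s=2_500, views_5s=1_000),
]

for s in rank_hooks(stats):
    print(f"{s.label}: thumbstop={thumbstop_rate(s):.1%} hold={hold_rate(s):.1%}")
```

Reading both numbers together matters: a hook with a lower thumbstop rate can still hold viewers better once they stop, which is worth knowing before you cut it.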

For angle tests

- CTR (outbound where available)

- CVR on the landing page (if you can segment)

- CPA / MER directionally

Angles should improve intent, not just views.

For execution tests

- CPA stability at higher spend

- Frequency tolerance

- Comment sentiment and quality (are people asking the right questions?)

Execution winners tend to scale more smoothly.

Practical creative templates (Meta + TikTok)

You can run these as AI UGC scripts with the same skeleton and swap only the variable under test.

Template 1: Problem → proof → payoff (20–25s)

Hook (test variable): “If you’re doing X, stop.”

Problem (3–6s): Name the frustration in plain language.

Proof (6–15s): Show the product in use + one credible reason.

Payoff (15–22s): What changes in their day.

CTA (last 2–3s): “I grabbed it from [brand], link below.”
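One way to keep a batch honest is to store the skeleton once and generate every variant from it, so the hook is the only line that can differ. This is a sketch, not a production tool: the body lines are the placeholder descriptions from Template 1, the hooks are the TikTok examples from the hook-testing section, and the `HOOK_01` style labels are an assumed naming scheme.

```python
from typing import Dict, List

# Shared body: identical in every variant. Placeholder text from Template 1.
SHARED_BODY: List[str] = [
    "Problem (3-6s): Name the frustration in plain language.",
    "Proof (6-15s): Show the product in use + one credible reason.",
    "Payoff (15-22s): What changes in their day.",
    "CTA (last 2-3s): I grabbed it from [brand], link below.",
]


def build_variants(hooks: List[str], body: List[str]) -> Dict[str, List[str]]:
    """Return one script per hook; only the first line differs."""
    variants: Dict[str, List[str]] = {}
    for i, hook in enumerate(hooks, start=1):
        label = f"HOOK_{i:02d}"  # assumed labeling scheme
        variants[label] = [f"Hook (0-2s): {hook}", *body]
    return variants


hooks = [
    "I didn't expect this to work for dry skin...",
    "If your moisturizer pills, do this instead.",
    "3 reasons your skin barrier isn't improving.",
]

variants = build_variants(hooks, SHARED_BODY)
for label, lines in variants.items():
    print(label)
    for line in lines:
        print("  " + line)
```

Because the body is defined in one place, nobody can quietly tweak the proof or the CTA in a single variant and contaminate the test.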

Template 2: “3 reasons” list (15–20s)

Hook: “3 reasons your [goal] isn’t happening.”

Reason 1/2/3: Each is one sentence, one visual.

Close: “This is what I use instead.”

This works well on TikTok where list formats match consumption.

Template 3: Review-led (12–18s)

Hook: Quote a real review line (or paraphrase it).

Body: Show the product and explain why that review makes sense.

Close: “If you have the same issue, try it.”

If you want to sanity-check what other teams think of AI UGC workflows, see https://www.ezugc.ai/reviews.

Common mistakes that waste budget

Testing too many variables at once

You get “interesting” results you can’t act on.

Declaring winners too late

If you wait for perfect certainty, you’ll keep mediocre ads alive and starve the next batch.

Overproducing before you have a message

Polish doesn’t fix a weak angle.

Ignoring the comment section

Comments are free research.

They tell you objections, alternative uses, and language you should steal (ethically) for the next round of hooks.

FAQ

How many hooks should be tested each week?

Start with 6–12 hooks per product per week.

That’s enough variety to find signal without turning production into chaos. If your team is small, do 6; if you have bandwidth, do 12 and keep everything else identical.

What defines a winning AI UGC creative?

A winner is a creative that:

- Earns attention early (strong first 2 seconds)

- Keeps the promise consistent through the middle

- Produces stable results when you add spend (not just a lucky day)

In practice: a winning AI UGC ad is one you can confidently iterate on (new angles, new proofs, new CTAs) without rebuilding from scratch.

How do teams keep tests comparable?

They lock the environment and change one variable:

- Same objective, audience type, offer, landing page, and length bucket

- Same campaign/ad set structure for the test group

- Same reporting window

Then they label each creative with the variable under test (e.g., HOOK_05 vs HOOK_06) so the next decision is obvious.
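A small helper can keep those labels consistent across the team. This sketch assumes a `LAYER_NN` pattern: `HOOK` comes from the example above, while the `ANGLE` and `EXEC` prefixes and the two-digit index are assumptions for illustration.

```python
import re
from typing import Tuple

# Assumed pattern: layer prefix, underscore, two-digit index.
LABEL_RE = re.compile(r"^(HOOK|ANGLE|EXEC)_(\d{2})$")


def make_label(layer: str, index: int) -> str:
    """Build a label such as HOOK_05; reject anything off-pattern."""
    label = f"{layer.upper()}_{index:02d}"
    if not LABEL_RE.match(label):
        raise ValueError(f"invalid creative label: {label}")
    return label


def parse_label(label: str) -> Tuple[str, int]:
    """Split a label back into (layer, index) for reporting."""
    match = LABEL_RE.match(label)
    if match is None:
        raise ValueError(f"invalid creative label: {label}")
    return match.group(1), int(match.group(2))


print(make_label("hook", 5))
print(parse_label("HOOK_06"))
```

Parsing the label back out means a report can group results by layer without anyone maintaining a separate lookup sheet.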

Where to go next

- https://www.ezugc.ai/ai-ugc

- https://www.ezugc.ai/features/ai-ugc-video

- https://www.ezugc.ai/tools/ai-hook-generator

- https://www.ezugc.ai/reviews

Start creating

If you want to ship faster, start here: Create with EzUGC.
